
12/21/2023

Url extractor software

⚠️ These steps have changed! (The old steps will still work, but the new ones are simpler and get more URLs.) Use the raw-jsonl reader from discard2 and save its output to a file. Do not name that file urls.url or ignores.url. Run cargo r messages.jsonl discard2, assuming you named the file messages.jsonl. Then use a program to get rid of any duplicate URLs. (There shouldn't be any, but I'm not perfect.) On *nix you can use sort -u or uniq (uniq only drops adjacent duplicates, so sort the list first). To get even more data (server emojis, role icons, and more), use -parse-websockets.
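If sort -u isn't available, the de-duplication step can be sketched in a few lines of Python. This is only an illustration, not part of the tool: the function name dedupe_urls is made up for this example, and unlike sort -u it keeps the URLs in first-seen order instead of sorting them.

```python
def dedupe_urls(lines):
    """Return the unique, non-empty URLs from `lines`, in first-seen order."""
    seen = set()
    unique = []
    for line in lines:
        url = line.strip()  # drop the trailing newline and stray spaces
        if url and url not in seen:
            seen.add(url)
            unique.append(url)
    return unique


# Hypothetical sample data standing in for the contents of a URL list file.
sample = [
    "https://example.com/a\n",
    "https://example.com/b\n",
    "https://example.com/a\n",
    "\n",
]
print(dedupe_urls(sample))
```

To run it on a real list, read the file's lines and pass them in, for example dedupe_urls(open("urls.url")).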

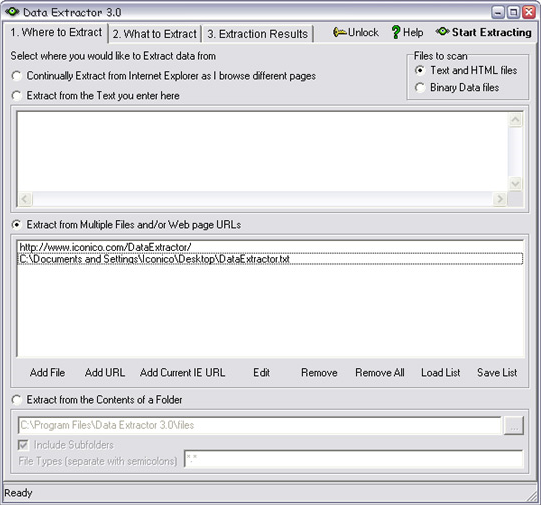

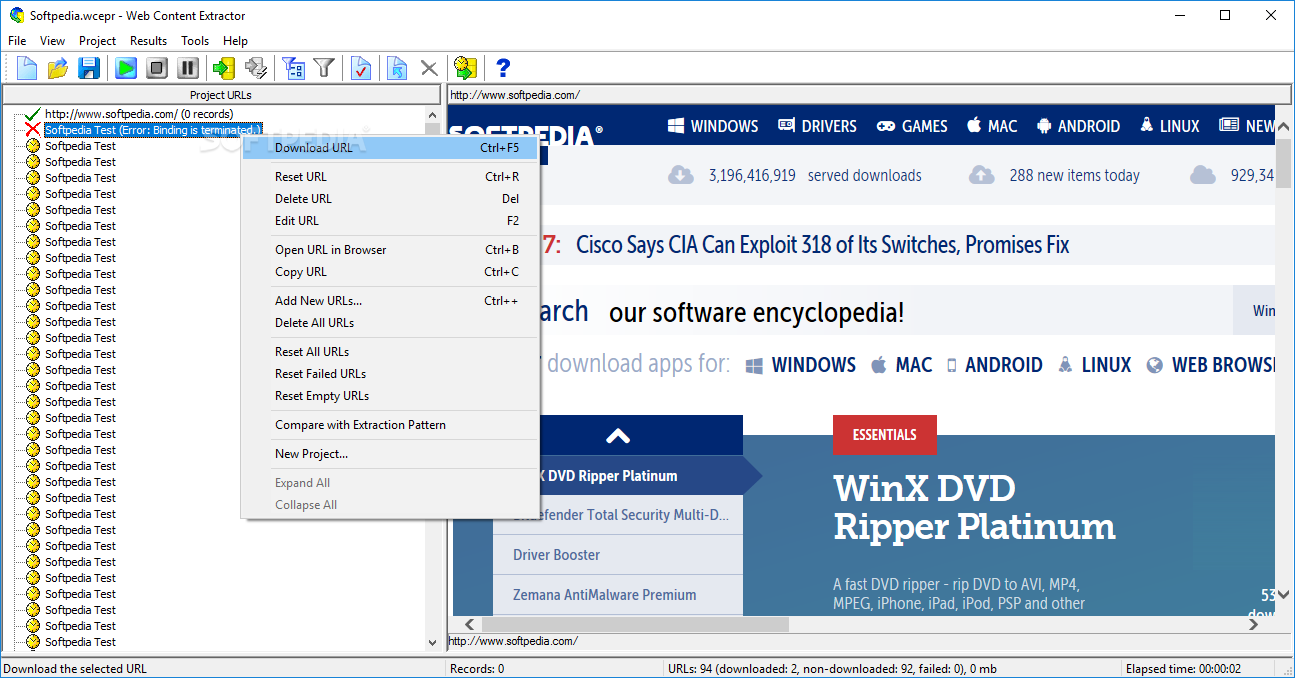

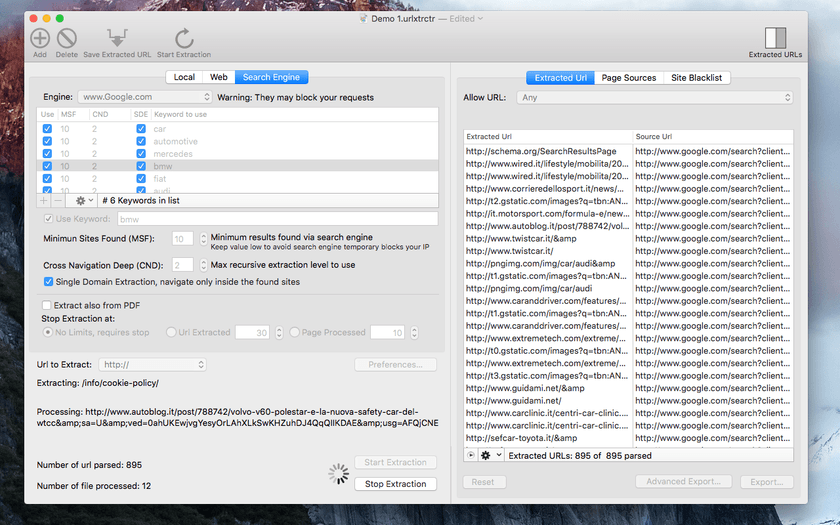

You can use the URL lists for whatever you please! (Except DDoSing. I don't want to be your partner in crime, believe it or not.)

⚠️ Every time you run the script, it will overwrite urls.url with the URLs from the current run. A good idea is to use a separate directory for each scrape (Cargo's --manifest-path can help with that) or back up the urls.url file before you run the script.

Tip: ignores.url is a list of URLs that should NOT be extracted. This allows you to run the script on different scrapes without having duplicates. It's not perfect, but it should work 99% of the time. It's also helpful if you have previously used other tools to scrape URLs - just add the URLs you scraped to ignores.url and they won't be scraped again! ignores.url is read into memory, new URLs are added to it, and then ignores.url is overwritten with the loaded values. As such, don't modify ignores.url while the script is running - your changes will have no effect, and when the script finishes they will be overwritten! (Also, I think this goes without saying, but don't run the app multiple times at the same time in the same folder. That's a recipe for turnabout disaster.)

Usage with Discord History Tracker (DESKTOP APP ONLY)

⚠️ Note that the DHT extractor is pretty much unmaintained since I no longer use it. I'll fix bugs, but it doesn't support embeds or any new DHT features. It only supports extracting attachment URLs (which can now be done via DHT!) and finding URLs in messages using a regex (and getting avatar URLs). It does not look through embeds or any other feature.

Example: cargo r /home/thetechrobo/Discordbackups/dsicord_data/SteamgridDB/SteamGridDB.dht dht

Obviously replace the file path with the actual one. Of course, if the file path has spaces, wrap it in quotes (") if your shell requires it. Unless, of course, you have the exact same path as me - in which case, twinsies!

Usage with discard2: the discard2 steps are at the top of this page.
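To make the ignores.url and urls.url behaviour concrete, here is a rough Python sketch of one run. It is not the tool's actual code (the tool is run with Cargo, and its regex isn't shown in this text): the URL_RE pattern, the run function and the read_url_list helper are all assumptions made up for illustration. Only the file names and the read, add, overwrite sequence come from the description above.

```python
import re
import tempfile
from pathlib import Path

# Deliberately simple pattern for illustration; the tool's real regex is not shown here.
URL_RE = re.compile(r"https?://[^\s<>\"]+")


def read_url_list(path):
    """Load one URL per line, or an empty set if the file does not exist yet."""
    if not path.exists():
        return set()
    return {line.strip() for line in path.read_text().splitlines() if line.strip()}


def run(messages, workdir):
    """One run: skip ignored URLs, overwrite urls.url, then rewrite ignores.url."""
    workdir = Path(workdir)
    ignores_path = workdir / "ignores.url"
    ignored = read_url_list(ignores_path)  # read into memory once, at startup

    new_urls = []
    for text in messages:
        for url in URL_RE.findall(text):
            if url not in ignored:
                ignored.add(url)  # later runs will skip this URL
                new_urls.append(url)

    # urls.url only ever holds the URLs from the current run.
    (workdir / "urls.url").write_text("".join(u + "\n" for u in new_urls))
    # ignores.url is overwritten from memory, so edits made mid-run are lost.
    ignores_path.write_text("".join(u + "\n" for u in sorted(ignored)))
    return new_urls


with tempfile.TemporaryDirectory() as scrape_dir:
    print(run(["see https://a.example/x and https://b.example/y"], scrape_dir))
    print(run(["https://a.example/x again, plus https://c.example/z"], scrape_dir))
```

The second call only reports https://c.example/z, because the first run already recorded the other address in ignores.url. It also shows why backing up urls.url matters: after the second run, the file no longer contains the first run's URLs.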
discord-urls-extractor

Maintenance Level: Updated as I need it. Updates are made when I'm interested, or when they're necessary. I'm actually using this program in production!

Information: Created for ingesting URLs from DHT scrapes (and now discard2 ones, too!) (oh wait: now DiscordChatExporter ones, three!) into the Archiveteam URLs project.

It can be hard work to browse all the folders and scrape the web links. Fortunately, Vovsoft URL Extractor can help when you need URL scraper software. The software scans an entire folder for files that contain links and displays them all within its main window, allowing you to export the list to a file. All the options are clear and simple, and they all fit within the one-window interface. All you need to do is select the files you want the application to analyze and press the «Start» button. Vovsoft URL Extractor supports file masks to help you filter the files.

Sometimes we need to grab all URLs (Uniform Resource Locators) from files and folders. Once installed, you can start the application and begin searching for links almost immediately. You only need to provide a directory, as the program can take care of the rest.

Vovsoft URL Extractor is one of the best programs that can harvest http and https web page addresses. You can extract and recover all URLs from files in seconds.
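For readers curious what "scan a folder with a file mask" boils down to, here is a small Python sketch of the general idea. It is not how Vovsoft URL Extractor is implemented (that isn't described here): the scan_folder function, its mask parameter and the regex are assumptions for illustration only.

```python
import re
import tempfile
from pathlib import Path

# Simple illustrative pattern for http and https addresses.
URL_RE = re.compile(r"https?://[^\s<>\"']+")


def scan_folder(root, mask="*"):
    """Collect unique URLs from every file under `root` whose name matches `mask`."""
    found = []
    seen = set()
    for path in sorted(Path(root).rglob(mask)):  # the mask works like *.txt or *.html
        if not path.is_file():
            continue
        # Undecodable bytes are ignored so binary files do not abort the scan.
        text = path.read_text(encoding="utf-8", errors="ignore")
        for url in URL_RE.findall(text):
            if url not in seen:
                seen.add(url)
                found.append(url)
    return found


with tempfile.TemporaryDirectory() as folder:
    Path(folder, "notes.txt").write_text("docs at https://example.com/docs\n")
    Path(folder, "debug.log").write_text("fetched http://example.org/a\n")
    print(scan_folder(folder, "*.txt"))  # only notes.txt matches the mask
```

Passing "*" as the mask scans every file under the folder, which is closer to the "just provide a directory" workflow described above.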
